Late on a Sunday afternoon, a monitoring system flagged a CPU spike on one of our shared hosting servers. What started as a routine investigation turned into a multi-hour battle with OpenAI's GPTBot crawler, and ultimately required a formal complaint report and a firewall-level block to resolve. This is blatant GPTBot abuse. Here's exactly what happened, how we diagnosed it, and what we did to stop it.

The Alert

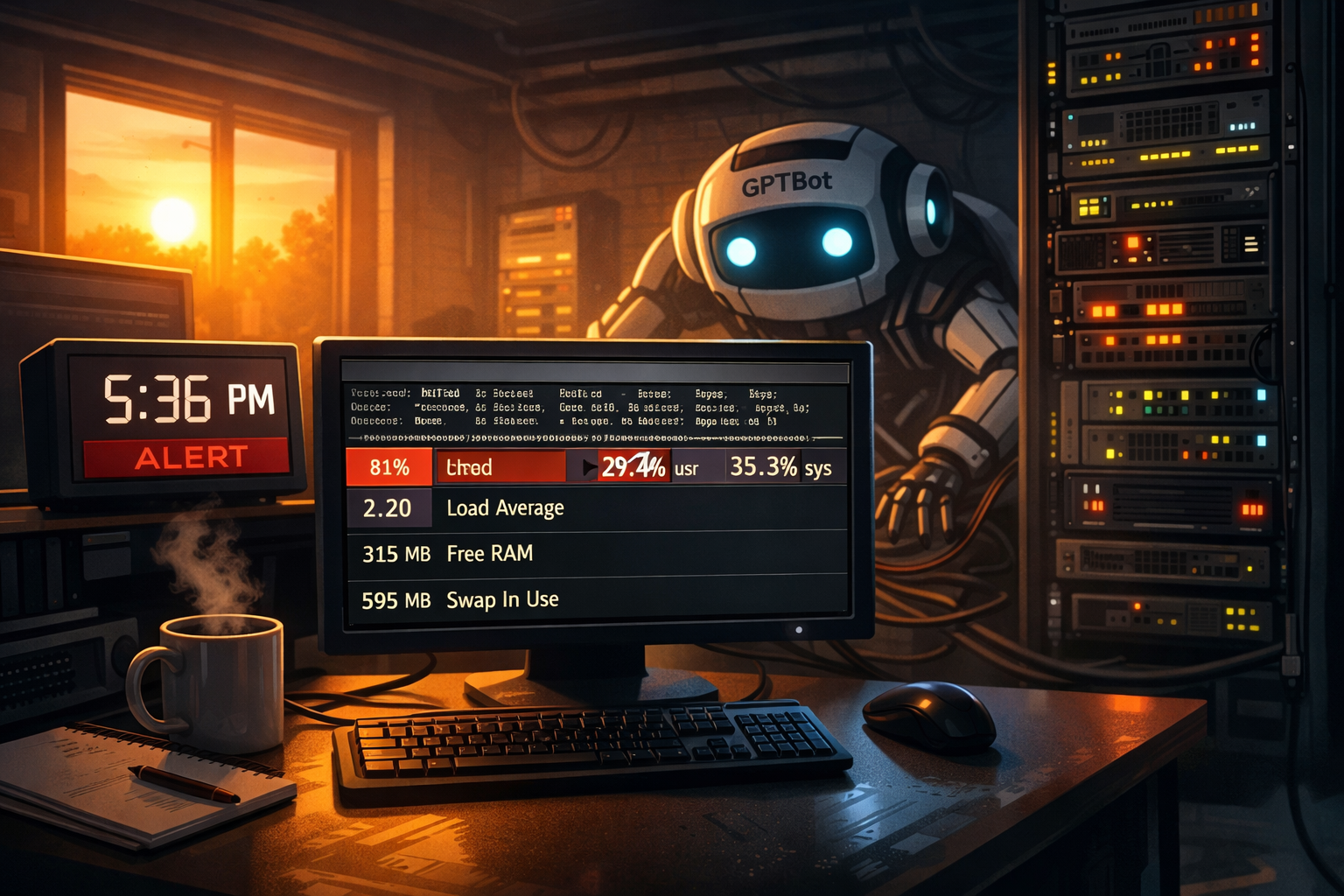

At 5:36 PM PST, our automated monitoring triggered a CPU spike alert on a client's VPS server.

The top output showed an httpd process consuming 6.2% CPU with a telling breakdown: 29.4% user time alongside 35.3% system time. That high sys% pointed to something beyond normal PHP processing — excessive process forking, I/O pressure, or socket activity were all on the table.

Isolating the Culprit

First step was to check how many php-cgi processes were actually running:

ps aux | grep php-cgi

domain+ 1172768 94.0 2.4 274188 90880 ? R 17:52 0:00 /opt/cpanel/ea-php83/root/usr/bin/php-cgi

macron 1172771 0.0 1.0 323896 38844 ? R 17:52 0:00 /opt/cpanel/ea-php83/root/usr/bin/php-cgi

Only three processes total, but one, belonging to the domain.com cPanel account, was running at 94% CPU. This wasn't a flood of processes; it was a single request so expensive it was saturating the server on its own. The investigation narrowed to one account's Drupal install.

Finding the Access Logs

The logs weren't in the expected location, so we tracked them down:

find /usr/local/apache/domlogs -name "domain*" 2>/dev/null

/usr/local/apache/domlogs/domain.com-ssl_log

/usr/local/apache/domlogs/domain.com-bytes_log

Tailing the SSL log immediately revealed the pattern. Every single request came from the same IP, with the same User-Agent string:

74.7.227.161 - - [22/Feb/2026:17:51:46] "GET /region/town/services/event-entertainment HTTP/2.0" 404 - "GPTBot/1.3"

74.7.227.161 - - [22/Feb/2026:17:52:04] "GET /region/town/valet-parking HTTP/2.0" 404 - "GPTBot/1.3"

74.7.227.161 - - [22/Feb/2026:17:52:13] "GET /region/town/pet-friendly HTTP/2.0" 404 - "GPTBot/1.3"

The User-Agent was GPTBot/1.3, OpenAI's web crawler, and every URL it was requesting returned a 404. These pages don't exist on the site.

The Scale of the Problem

# Total requests from this IP

grep "74.7.227.161" /usr/local/apache/domlogs/domain.com-ssl_log | wc -l

4475

# When did it start?

grep "74.7.227.161" /usr/local/apache/domlogs/domain.com-ssl_log | head -1

74.7.227.161 - - [22/Feb/2026:13:49:15] "GET /...//personal HTTP/2.0" 302 ...

# Total bandwidth consumed

grep "74.7.227.161" ... | awk '{sum += $10} END {print sum/1024/1024 " MB"}'

31.3459 MB

Output:

- Total Requests: 4,475

- Sustained Rate: ~19/min

- Bandwidth Used: 31.3 MB

- Were 404s: 4,362

The crawl had been running since 1:49 PM, nearly four hours, and was accelerating: from 71 requests in the first hour to over 1,500 per hour by the afternoon. Of the 4,475 total requests, 97.5% were to pages that don't exist.
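The hour-by-hour acceleration can be read straight from the access log. The following is a minimal sketch rather than the exact commands used during the incident: `crawl_profile` is a hypothetical helper name, and it assumes the log layout shown in the excerpts above (client IP first, bracketed timestamp in the fourth field, status code directly after the quoted request line).

```shell
# crawl_profile LOG IP
# Prints "DD/Mon/YYYY:HH total_requests not_found" for one client IP,
# one line per hour, in the order the hours first appear in the log.
crawl_profile() {
    awk -v ip="$2" '
        $1 == ip {
            split($4, t, ":")                  # t[1]="[22/Feb/2026", t[2]="17"
            hour = substr(t[1], 2) ":" t[2]
            split($0, q, "\"")                 # q[3] holds " STATUS BYTES "
            split(q[3], s, " ")                # s[1] is the status code
            if (!(hour in total)) order[++n] = hour
            total[hour]++
            if (s[1] == "404") missing[hour]++
        }
        END {
            for (i = 1; i <= n; i++)
                printf "%s %d %d\n", order[i], total[order[i]], missing[order[i]]
        }
    ' "$1"
}
```

For example, `crawl_profile /usr/local/apache/domlogs/domain.com-ssl_log 74.7.227.161` would print one row per hour, which makes a ramp from tens to hundreds of requests per hour easy to spot.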

The Fabricated URL Problem

URLs like /region/town/room-service or /region/town/valet-parking appear to have been constructed algorithmically by appending plausible-sounding slugs to known pages. One request even contained a double slash, /services//personal, a telltale sign of programmatic URL construction rather than real link following.

Each of these non-existent URLs triggered a full Drupal PHP execution cycle to render the 404 page. With 19 requests per minute sustained, this created a continuous stream of expensive PHP processes with no legitimate purpose.
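One quick way to look for this kind of programmatic URL construction is to list every requested path that contains an empty segment. This is only a sketch under the same log-layout assumption as the excerpts above; `double_slash_paths` is a hypothetical helper name, and a double slash on its own is a hint rather than proof.

```shell
# double_slash_paths LOG
# Prints each distinct request path that contains "//".
double_slash_paths() {
    awk -F'"' '
        {
            split($2, r, " ")                  # r[1]=method, r[2]=path, r[3]=protocol
            if (r[2] ~ /\/\//) print r[2]
        }
    ' "$1" | sort -u
}
```

Run against the SSL log from this incident, it would be expected to surface paths such as /services//personal.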

Attempting the .htaccess Block

The first remediation attempt was a User-Agent block inserted into the site's .htaccess immediately after RewriteEngine on:

# Block GPTBot by User-Agent

RewriteCond %{HTTP_USER_AGENT} GPTBot [NC]

RewriteRule .* - [F,L]

The rule was inserted correctly and appeared syntactically valid, but GPTBot continued to get through, now receiving 500 errors instead of 404s, which was actually worse. Apache on cPanel was not processing the rewrite rule as expected for this account configuration.
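To confirm what a block is actually doing, it helps to tally the status codes the crawler receives after the change. A minimal sketch, assuming the User-Agent is the last quoted field on each log line (as in the excerpts above); `status_counts` is a hypothetical helper name.

```shell
# status_counts LOG AGENT
# Prints "STATUS COUNT" for every request whose User-Agent contains AGENT
# (case-insensitive), sorted by status code.
status_counts() {
    awk -F'"' -v agent="$2" '
        {
            ua = $(NF - 1)                     # last quoted field is the User-Agent
            if (index(tolower(ua), tolower(agent))) {
                split($3, s, " ")              # $3 holds " STATUS BYTES "
                count[s[1]]++
            }
        }
        END { for (code in count) print code, count[code] }
    ' "$1" | sort
}
```

A working User-Agent block should show up as a growing count of 403 responses. A shift from 404 to 500, as seen here, means the requests are still being processed and something in the chain is failing.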

Why .htaccess Failed (And Why It Never Could Work)

A critical insight from this incident: the .htaccess rewrite approach was doomed from the start. Understanding why will save you significant wasted effort.

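A rewrite rule only helps if Apache actually applies it. When it does not, as the 500 responses here showed, requests for URLs that don't exist still fall through to the application, because the 404 is generated after the rewrite rules have already been processed.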

The Request Flow

Request → Firewall (CSF) → Apache → mod_rewrite (.htaccess) → Drupal bootstrap → 404 page

By the time mod_rewrite sees the request:

- Apache has already accepted the connection

- Loaded the .htaccess file

- Processed all rewrite conditions

What Actually Happens with Fabricated URLs

| Step | What Processes | Result |
|---|---|---|
| 1 | Firewall (CSF) | Passes (IP not yet blocked) |
| 2 | Apache accepts connection | Connection established |
| 3 | mod_rewrite checks rules | No matching rewrite target |
| 4 | Drupal bootstrap loads | Full PHP execution |
| 5 | Drupal routing | No matching route found |
| 6 | 404 page rendered | Full PHP execution continues |
| 7 | Response sent | 404 status code returned |

Every single fabricated URL triggers the full Drupal bootstrap (autoloading from a codebase of 10,000+ PHP files, database queries for routing, and the 404 page rendering) before Drupal ever decides "there's nothing here."

Why This Matters

- The .htaccess block appeared to fail: it never had a chance to stop the request early

- The CPU was still spiking: Drupal was still doing full work for every fabricated URL

- Only the firewall worked: it stopped the request at step 1, before Apache ever saw it

Common Misconception

Many believe .htaccess can block bad requests early. In reality:

- mod_rewrite executes after the request is accepted: Apache has already committed resources to the connection

- 404s are generated after the application bootstraps: in Drupal's case, that means full PHP execution through the entire stack

- RewriteCond %{REQUEST_FILENAME} !-f checks whether a file exists: fabricated URLs never match a real file, so the condition passes and the request proceeds to the application

The Critical Takeaway: The firewall block wasn't just the nuclear option—it was the only option that could actually stop the attack at the right layer. The .htaccess approach was like locking your car door after the thief is already in the driver's seat.

Escalating to the Firewall

With the .htaccess approach ineffective, I moved to a firewall-level block using CSF:

csf -d 74.7.227.161 "GPTBot abuse domain.com"

This drops the connection at the network level before it reaches Apache: zero PHP execution, zero CPU cost. The log went silent for that IP immediately and CPU returned to normal within minutes.

Timeline of the Incident

- 13:49 PST First GPTBot request logged. 71 requests in the first hour, all probing fabricated URLs.

- 15:00 PST Crawl accelerates to 915 requests/hour. Server load begins to climb.

- 17:36 PST CPU spike alert triggered. Server at 81% CPU, php-cgi process at 94%.

- 17:52 PST Root cause identified as GPTBot crawling domain.com account.

- 18:11 PST .htaccess block inserted. GPTBot continues, now returning 500 errors.

- 18:29 PST Second CPU spike alert. .htaccess block confirmed ineffective.

- 18:33 PST Firewall block applied via CSF. Traffic from 74.7.227.161 drops immediately.

- 18:35 PST Server CPU normalises. Incident resolved.

Producing a Formal Complaint

Given the scale of the crawl, the sustained resource abuse, the fabricated URL probing, and the likely harvesting of proprietary content for AI training without consent, we prepared a formal complaint report on behalf of the site owner for submission to OpenAI. It documents the full evidence, including timestamped log data, bandwidth figures, response code breakdowns, and the fabricated URL behaviour.

Final Resolution Checklist

- GPTBot blocked at firewall level via CSF — IP 74.7.227.161 dropped at network layer

- .htaccess rewrite rule left in place as a secondary defence layer

- User-agent: GPTBot / Disallow: / added to robots.txt as formal opt-out on record

- Runaway php-cgi processes terminated to restore normal server operation

- Formal complaint report prepared and submitted to OpenAI
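The robots.txt opt-out is easy to get subtly wrong, so it is worth checking mechanically. The sketch below is a deliberately simplified reading of robots.txt: it only looks for a group that names the crawler exactly and contains `Disallow: /`, and it ignores wildcards and Allow rules. `robots_blocks_agent` is a hypothetical helper name, and the file argument is assumed to be a local copy of the site's robots.txt.

```shell
# robots_blocks_agent FILE AGENT
# Exit status 0 if FILE has a group for AGENT containing "Disallow: /".
robots_blocks_agent() {
    awk -v agent="$2" '
        BEGIN { agent = tolower(agent) }
        {
            line = tolower($0)
            sub(/#.*/, "", line)               # drop comments
            gsub(/[ \t\r]/, "", line)          # drop whitespace and CRs
        }
        line ~ /^user-agent:/ {
            if (in_rules) { matched = 0; in_rules = 0 }   # a new group starts
            if (substr(line, 12) == agent) matched = 1
            next
        }
        line ~ /^disallow:/ {
            in_rules = 1
            if (matched && substr(line, 10) == "/") found = 1
            next
        }
        line != "" { in_rules = 1 }            # any other rule ends the header
        END { exit !found }
    ' "$1"
}
```

Usage would look like `robots_blocks_agent robots.txt GPTBot && echo "opt-out present"`.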

Why Was This Site Targeted So Aggressively?

The content is training-data gold. Thousands of individual structured pages with unique content are highly valuable for AI training datasets, and GPTBot appears to have prioritised the site accordingly.

A well-formed sitemap acted as an invitation. A clean, extensive sitemap told GPTBot exactly how many pages existed and gave it a roadmap. Sites without proper sitemaps tend to get crawled more superficially and quickly abandoned.

The fabricated URL probing is self-reinforcing. Once GPTBot determined the site was valuable, it began speculatively probing for content it predicted should exist. Even 500 error responses signal "this domain is active" and encourage continued crawling. A clean firewall drop is the correct signal to send.

Recommendations for Site Owners

- Add explicit User-agent: GPTBot / Disallow: / to your robots.txt if you don't want OpenAI harvesting your content

- Enable Drupal's Page Cache and Dynamic Page Cache modules: cached responses are dramatically cheaper to serve

- Consider a static 404 page that bypasses PHP entirely for unknown URLs

- Set up rate-limiting in CSF or fail2ban to automatically flag IPs exceeding a threshold of requests per minute

- Monitor your access logs periodically for sustained single-IP activity — 19 requests per minute from one source should always trigger an alert
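The log-monitoring recommendation can be approximated with a short awk pass over the access log. This is a sketch, not a replacement for CSF or fail2ban rules: `busy_clients` is a hypothetical helper name, it assumes the timestamp layout shown in the excerpts above, and it reports each client's busiest single minute rather than a sustained average.

```shell
# busy_clients LOG LIMIT
# Prints "IP PEAK" for every client whose busiest minute exceeded LIMIT requests.
busy_clients() {
    awk -v limit="$2" '
        {
            minute = substr($4, 2, 17)         # e.g. "22/Feb/2026:17:51"
            hits[$1 SUBSEP minute]++
        }
        END {
            for (key in hits) {
                split(key, part, SUBSEP)       # part[1] = client IP
                if (hits[key] > peak[part[1]]) peak[part[1]] = hits[key]
            }
            for (ip in peak)
                if (peak[ip] > limit) print ip, peak[ip]
        }
    ' "$1" | sort
}
```

Something like `busy_clients /usr/local/apache/domlogs/domain.com-ssl_log 15` could run from cron and mail its output when it is non-empty. The threshold of 15 is only an illustration.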

Recommendations for Server Administrators

Enable and configure php-fpm

Typical settings for php-fpm on a VPS with 4 GB of RAM:

; Process Manager Settings

pm = dynamic

pm.max_children = 25

pm.start_servers = 5

pm.min_spare_servers = 5

pm.max_spare_servers = 10

pm.max_requests = 500

; Timeout Settings

request_terminate_timeout = 90s

request_slowlog_timeout = 5s

slowlog = /var/log/php-fpm/slow.log

; Resource Limits

rlimit_files = 131072

rlimit_core = unlimited

; Process Idle Timeout (only used when pm = ondemand)

pm.process_idle_timeout = 10s

Configuration Explanation:

| Parameter | Value | Purpose |
|---|---|---|
| pm.max_children | 25 | Maximum PHP-FPM processes running simultaneously. Calculated as: |
| pm.start_servers | 5 | Initial processes started (20% of max_children) |
| pm.min_spare_servers | 5 | Minimum idle processes ready for traffic spikes |
| pm.max_spare_servers | 10 | Maximum idle processes to prevent resource waste |
| pm.max_requests | 500 | Recycles processes to prevent memory leaks |
| request_terminate_timeout | 90s | Kills stuck processes after 90 seconds |

You can find helpful files to update the php-fpm configuration on iT-werX GIST.
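A common rule of thumb for sizing pm.max_children is to divide the memory left over for PHP by the average size of one worker. The sketch below shows that arithmetic only. `fpm_max_children` is a hypothetical helper, and the example inputs (1536 MB reserved for the OS, database, and Apache, and 100 MB per worker) are illustrative assumptions, not measurements from this server or the formula behind the value of 25 above.

```shell
# fpm_max_children TOTAL_MB RESERVED_MB WORKER_MB
# Integer estimate of how many PHP workers fit in the remaining memory.
fpm_max_children() {
    # $1 = total RAM, $2 = RAM reserved for everything else,
    # $3 = average resident size of one PHP worker (all in MB)
    echo $(( ($1 - $2) / $3 ))
}

# Example with assumed figures for a 4 GB VPS:
#   fpm_max_children 4096 1536 100
```

Measure the real worker size on your own server (for example from the RSS column of ps) before settling on a value.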

Tuning OPcache for PHP Performance

While PHP-FPM manages your processes, OPcache manages your compiled PHP code. Without it, PHP reads and compiles every script from disk on every single request, a massive waste of CPU and I/O. With it, compiled bytecode is stored in shared memory, ready for immediate execution. After the GPTBot attack, ensuring OPcache is properly tuned helps your server handle legitimate traffic spikes more efficiently.

What OPcache Does

When a PHP file is first executed, PHP compiles it into bytecode. OPcache stores this bytecode in shared memory. On subsequent requests, PHP uses the in-memory version, bypassing disk reads and recompilation entirely. For a CMS like Drupal or WordPress, which loads hundreds of files per request, this is transformative.

Checking OPcache Status

First, verify OPcache is enabled and see its current configuration:

- Create a temporary phpinfo.php in the domain's document root:

<?php phpinfo(); ?>

- Access it via browser (https://yourdomain.com/phpinfo.php) and search for "Zend OPcache".

- Delete the file immediately after checking, for security.

If OPcache isn't enabled in WHM, go to MultiPHP Manager and ensure the OPcache extension is selected for your PHP version. You may have to provision again using EasyApache 4 and rebuild the server that way. If your server is managed through WHM, it is highly recommended to make these changes there rather than by hand.

Where to Configure OPcache in WHM/cPanel

A critical nuance: most OPcache settings are PHP_INI_SYSTEM directives. This means they cannot be set per-domain via the MultiPHP INI Editor in cPanel. They must be set globally for each PHP version.

The correct file is: /opt/cpanel/ea-php83/root/etc/php.d/10-opcache.ini (adjust the PHP version path as needed). Edit this file directly as root.

Recommended OPcache Settings for a 4GB VPS

These are the values we recommend setting in 10-opcache.ini for a server with 8 domains running on 4GB RAM:

| Parameter | Value | Purpose & Calculation |
|---|---|---|
| opcache.enable | 1 | Turns OPcache on. |
| opcache.memory_consumption | 256 | Total memory (MB) for storing compiled scripts. Start with 256MB for a 4GB server. Monitor |
| opcache.interned_strings_buffer | 16 | Memory (MB) for interned strings. A higher value can improve performance for apps that use many identical strings. 16MB is a good starting point. |
| opcache.max_accelerated_files | 20000 | Maximum number of PHP files (scripts) to cache. A clean WordPress install can have 1000-2000 files; with plugins and a framework like Drupal, 20,000 is a safe baseline. Monitor |
| opcache.validate_timestamps | 1 | When enabled, OPcache checks if files on disk have been updated. Leave this on in most environments so code updates are reflected without a full service restart. |
| opcache.revalidate_freq | 60 | How often (in seconds) to check for file changes. Setting this to |
| opcache.fast_shutdown | 1 | Enables a faster shutdown sequence to free memory more quickly. Note that this directive was removed in PHP 7.2, so it has no effect on PHP 8.3. |
| opcache.save_comments | 1 | Keeps doc comments in the cached code. Required for annotations used by many libraries and frameworks (e.g., Symfony, Laravel). Leave this enabled. |
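To sanity-check the maximum-files setting (opcache.max_accelerated_files) against a real site, count the PHP source files under each account's document root. A minimal sketch: `count_php_files` is a hypothetical helper, and the extension list is an assumption based on the file types Drupal treats as PHP.

```shell
# count_php_files DIR
# Prints the number of PHP source files under DIR.
count_php_files() {
    # $1 = directory to scan, for example an account's document root
    find "$1" -type f \( -name '*.php' -o -name '*.inc' -o -name '*.module' \
        -o -name '*.install' -o -name '*.theme' \) | wc -l | tr -d ' '
}
```

Summing the result across all domains on the server gives a rough lower bound for the setting. Leave headroom above it, since the cache stops accepting new scripts once it is full.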

Applying Changes

Restart the Apache PHP-FPM service so the new settings are loaded:

/scripts/restartsrv_apache_php_fpm restart